Custom Web Scraper + Admin Dashboard (DB + Export)

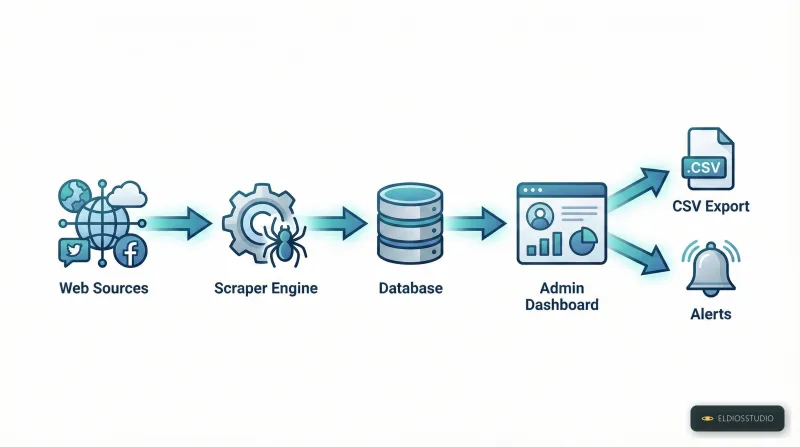

Need structured data from websites in a format you can actually use? I build complete systems that collect web data, store it in a database, and provide a clean admin dashboard for reviewing, searching, filtering, and exporting.

What you get

- Custom scraper built for your target sources and required fields

- Database storage (MySQL) with de-duplication and clean structure

- Admin dashboard for search, filters, pagination, and item details

- Review/approve workflow (optional) to validate data before export

- CSV export (custom columns and formats)

- Logging & reliability: retries, error logs, and safe parsing
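
To give a rough idea of how the de-duplication and custom-column CSV export could fit together, here is a minimal sketch. It is an illustration under assumptions, not the delivered code: it uses Python's built-in `sqlite3` as a stand-in for MySQL, and the `items` table, its `url`/`title`/`price` columns, and the function names are all hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical schema: the columns an export is allowed to request.
ALLOWED_COLUMNS = ("url", "title", "price")


def make_db():
    """Create an in-memory database (SQLite here as a stand-in for MySQL)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE items ("
        " url TEXT PRIMARY KEY,"  # the URL acts as the de-duplication key
        " title TEXT NOT NULL,"
        " price REAL)"
    )
    return conn


def count_items(conn):
    return conn.execute("SELECT COUNT(*) FROM items").fetchone()[0]


def save_items(conn, items):
    """Insert scraped items, skipping any URL already stored.

    Returns the number of rows that were actually new.
    """
    before = count_items(conn)
    conn.executemany(
        "INSERT OR IGNORE INTO items (url, title, price)"
        " VALUES (:url, :title, :price)",
        items,
    )
    conn.commit()
    return count_items(conn) - before


def export_csv(conn, columns):
    """Return the stored items as CSV text with the requested columns."""
    unknown = [c for c in columns if c not in ALLOWED_COLUMNS]
    if unknown:
        raise ValueError(f"unknown columns: {unknown}")
    # Column names are checked against the allow-list above before
    # being placed into the query text.
    rows = conn.execute(
        f"SELECT {', '.join(columns)} FROM items ORDER BY url"
    )
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(columns)
    writer.writerows(rows)
    return buf.getvalue()
```

In this sketch, saving the same URL twice stores it once, and `export_csv(conn, ["title", "price"])` returns only those two columns in that order. In a MySQL build the same idea would typically rely on a unique key on the chosen de-duplication field.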

Common use cases

- Price monitoring and product tracking

- Listings aggregation (public directories, marketplaces, catalogs)

- Competitor content monitoring (public pages)

- Lead collection from public business directories (where permitted)

- Change detection (new items, removed items, price changes) as an add-on
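
As an illustration of the change-detection idea, the sketch below compares two scrape runs. It assumes each run is reduced to a mapping from item URL to price; the function name and the shape of the result are hypothetical, and a real project would compare whichever fields you care about.

```python
def diff_snapshots(previous, current):
    """Compare two scrape runs, each a dict of {item_url: price}.

    Returns the URLs that are new, removed, or whose price changed.
    """
    prev_keys = set(previous)
    curr_keys = set(current)
    return {
        "new": sorted(curr_keys - prev_keys),
        "removed": sorted(prev_keys - curr_keys),
        "price_changed": sorted(
            url
            for url in prev_keys & curr_keys
            if previous[url] != current[url]
        ),
    }


# Example with made-up data:
yesterday = {"https://example.com/a": 10.0, "https://example.com/b": 5.0}
today = {"https://example.com/a": 12.0, "https://example.com/c": 7.0}
changes = diff_snapshots(yesterday, today)
```

Here `changes["new"]` holds the URL ending in `/c`, `changes["removed"]` the one ending in `/b`, and `changes["price_changed"]` the one ending in `/a`. A result like this is also what an alert (email or Telegram) would be built from.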

Optional add-ons

- Scheduling: hourly/daily/weekly runs

- Alerts: email/Telegram notifications for new items or changes

- Multi-source aggregation: combine multiple sources into one DB

- User login & roles: secure access to the dashboard

- Deployment: VPS/hosting deployment + documentation

What I need from you

- Target URLs and the exact fields you want (title, price, link, etc.)

- How often it should run (manual / scheduled)

- Your preferred export format (CSV columns) and any filters/rules

Note: This service is built for publicly available sources or sources you have the right to access.

Send me your target sources and requirements, and I’ll confirm the scope and propose the best setup and timeline for your project.